The hype surrounding artificial intelligence tutors is easy to get caught up in — but the evidence so far counsels restraint.

Several studies have found that AI chatbot tutors can actually backfire: students tend to over-rely on them, receiving ready-made solutions rather than genuinely grappling with material. Even AI tutors specifically designed to withhold direct answers have not consistently outperformed traditional, AI-free learning methods.

Yet the researchers behind these skeptical findings haven't abandoned the field. Many are still actively experimenting, searching for designs that actually work.

One of the more promising leads has less to do with how an AI tutor explains a concept and more to do with which problem it asks a student to tackle next.

A University of Pennsylvania team — including some researchers with AI-skeptic credentials — recently put this idea to the test in a study involving nearly 800 Taiwanese high school students learning Python programming. All participants used the same AI tutor, built with guardrails to prevent it from simply handing over answers.

The critical variable was sequencing. Half the students were randomly assigned a fixed progression of practice problems, moving from easier to harder in a predetermined order. The other half received a personalized sequence: the AI tutor continuously recalibrated each problem's difficulty based on how the student was performing and how they were engaging with the chatbot.

The concept draws on a well-established educational principle known as the "zone of proximal development." Problems that are too simple bore students; problems that are too daunting frustrate them. The sweet spot — challenging but achievable — is where meaningful learning happens.

Students in the personalized group outperformed those in the fixed-sequence group on a final exam. The researchers characterized the gap as equivalent to roughly six to nine months of additional schooling, a striking claim for an after-school online course that ran just five months. The tutor's creator, Angel Chung, a doctoral student at the Wharton School, acknowledged that this conversion of statistical units is "not a perfect estimate." (A draft paper describing the experiment was posted online in March 2026 and has not yet appeared in a peer-reviewed journal.)

Even so, it is early evidence that a relatively modest design tweak — calibrating problem difficulty to the individual student — can meaningfully shift outcomes.

Chung noted that tools like ChatGPT already feel highly personalized, since they respond directly to each student's specific questions. But that kind of responsiveness has a fundamental limitation. "Students usually don't know what they don't know," she said. "The student doesn't have the ability to ask the right questions to get the best tutoring."

To close that gap, Chung's team layered a separate machine-learning algorithm on top of a large language model. The algorithm monitors how students interact with the course platform — tracking how they answer practice questions, how often they revise their code, and the quality of their chatbot conversations — and uses those signals to determine which problem to present next.
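The study's actual algorithm is not spelled out here, so what follows is only a minimal Python sketch of the general idea: keep a running estimate of a student's ability, then choose the problem the student is predicted to solve about 70 percent of the time. The `Problem` class, the difficulty scale, the 0.7 target and the step size `k` are all illustrative assumptions. The sketch also uses only whether an answer was right, not the code-revision or chat-quality signals that Chung's team tracked.

```python
import math
from dataclasses import dataclass


@dataclass
class Problem:
    name: str
    difficulty: float  # hypothetical scale: higher means harder


def p_correct(ability: float, difficulty: float) -> float:
    """Logistic estimate of the chance the student solves the problem."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


def update_ability(ability: float, difficulty: float, solved: bool,
                   k: float = 0.4) -> float:
    """Nudge the ability estimate toward the observed outcome (Elo-style)."""
    outcome = 1.0 if solved else 0.0
    return ability + k * (outcome - p_correct(ability, difficulty))


def next_problem(ability: float, pool: list[Problem],
                 target: float = 0.7) -> Problem:
    """Pick the problem whose predicted success rate is closest to the target."""
    return min(pool, key=lambda p: abs(p_correct(ability, p.difficulty) - target))


# Made-up problem pool, for illustration only.
POOL = [
    Problem("print", -3.0),
    Problem("conditionals", -1.0),
    Problem("loops", 1.0),
    Problem("recursion", 3.0),
]
```

With these made-up difficulties, `next_problem(0.0, POOL)` offers `conditionals`, while a student whose estimate has risen to 2.0 is offered `loops`. A solved problem raises the estimate and a missed one lowers it, so the sequence drifts harder or easier as the student goes.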

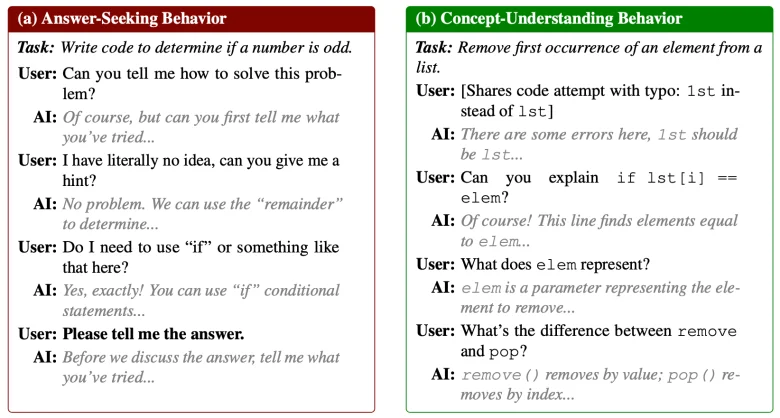

How different students interact with the chatbot tutor

In other words, true personalization isn't just about tailoring explanations — it's about tailoring the learning path itself.

That idea predates generative AI by decades. Long before ChatGPT, education researchers built "intelligent tutoring systems" that attempted something similar: estimating what a student understood and serving up the appropriate next problem. Those earlier systems couldn't hold natural conversations, but they could deliver hints and instant feedback. Rigorous evaluations found that well-designed versions produced significantly better learning outcomes.
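One classic way such systems estimated what a student understood is Bayesian knowledge tracing, which updates the probability that a skill has been mastered after each answer. The Python sketch below is a minimal illustration of that technique, not the method of any particular tutor mentioned in this story. The slip, guess and learning rates are placeholder values chosen for the example.

```python
def bkt_update(p_known: float, correct: bool, slip: float = 0.1,
               guess: float = 0.2, learn: float = 0.15) -> float:
    """Return the updated probability that the student has mastered a skill.

    slip:  chance a student who knows the skill still answers wrong
    guess: chance a student who does not know it answers right anyway
    learn: chance the skill is learned during this practice opportunity
    """
    if correct:
        evidence = p_known * (1 - slip)
        total = evidence + (1 - p_known) * guess
    else:
        evidence = p_known * slip
        total = evidence + (1 - p_known) * (1 - guess)
    posterior = evidence / total  # Bayes' rule given the observed answer
    # Allow for learning that happens while working the problem.
    return posterior + (1 - posterior) * learn
```

A right answer pushes the mastery estimate up and a wrong one pulls it down. A tutor built this way would typically keep serving problems on a skill until the estimate crosses some threshold, then move on.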

Their persistent weakness was engagement. Many students simply didn't want to use them.

Modern AI tools may offer a remedy. A chatbot that converses in a nearly human way could hold students' attention far more effectively than earlier systems ever managed.

In the University of Pennsylvania study, students in the personalized group spent considerably more time practicing — about three additional minutes per problem — which added up to roughly an extra hour per module compared with their counterparts in the fixed-sequence group. The researchers believe this deeper engagement in practice was a key driver of their stronger results.

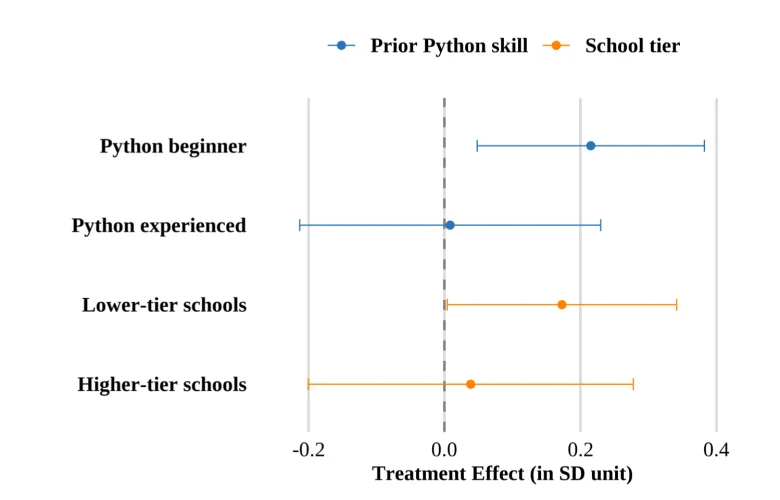

Prior knowledge also shaped who benefited most. Students new to Python gained more from the personalized sequencing than those who already had coding experience, who performed equally well under either approach. Students from less selective high schools also appeared to benefit disproportionately.

How students' background affected results

It's worth noting who these students were. All of the Taiwanese participants had voluntarily enrolled in an optional programming course — one that could bolster their college applications. Many were highly motivated, came from households with college-educated parents, and had some prior coding exposure.

Whether a similar approach would deliver results for less motivated students — those who are struggling academically and arguably most in need of support — remains an open question.

One potential path forward involves blending old and new approaches. Ken Koedinger, a professor at Carnegie Mellon University and a pioneer of intelligent tutoring systems, is experimenting with using new AI models to flag struggling, disengaged students for remote human tutors who can step in and provide motivation. "We are having more success," Koedinger said.

Humans, it seems, aren't obsolete — not yet.

Contact staff writer Jill Barshay at 212-678-3595 or jillbarshay.35 on Signal.

This story about AI tutors was produced by The Hechinger Report, a nonprofit, independent news organization focused on education.

The post The quest to build a better AI tutor appeared first on The Hechinger Report.